Using Mixedbread.ai embedding model with support for binary vectors

Check out the amazing blog post: Binary and Scalar Embedding Quantization for Significantly Faster & Cheaper Retrieval

Binarization is significant because:

Binarization reduces the storage footprint from 1024 floats (4096 bytes) per vector to 128 int8 (128 bytes).

32x less data to store

Faster distance calculations using hamming distance, which Vespa natively supports for bits packed into int8 precision. More on hamming distance in Vespa.

Vespa supports hamming distance with and without hnsw indexing.

For those wanting to learn more about binary vectors, we recommend our 2021 blog series on Billion-scale vector search with Vespa and Billion-scale vector search with Vespa - part two.

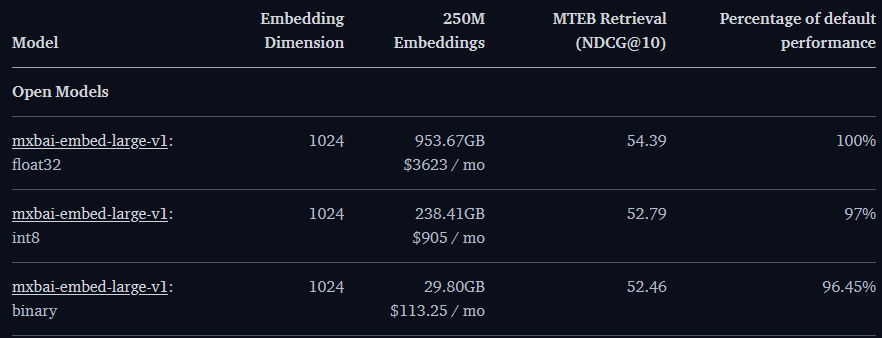

This notebook demonstrates how to use the Mixedbread mixedbread-ai/mxbai-embed-large-v1 model with support for binary vectors with Vespa. The notebook example also includes a re-ranking phase that uses the float query vector version for improved accuracy. The re-ranking step makes the model perform at 96.45% of the full float version, with a 32x decrease in storage footprint.

Install the dependencies:

[ ]:

!pip3 install -U pyvespa sentence-transformers

Examining the embeddings using sentence-transformers

Read the blog post for sentence-transformer usage.

sentence-transformer API. Model card: mixedbread-ai/mxbai-embed-large-v1.

Load the model using the sentence-transformers library:

[1]:

from sentence_transformers import SentenceTransformer

model = SentenceTransformer(

"mixedbread-ai/mxbai-embed-large-v1",

prompts={

"retrieval": "Represent this sentence for searching relevant passages: ",

},

default_prompt_name="retrieval",

)

Default prompt name is set to 'retrieval'. This prompt will be applied to all `encode()` calls, except if `encode()` is called with `prompt` or `prompt_name` parameters.

Some sample documents

Define a few sample documents that we want to embed

[4]:

documents = [

"Alan Turing was an English mathematician, computer scientist, logician, cryptanalyst, philosopher and theoretical biologist.",

"Albert Einstein was a German-born theoretical physicist who is widely held to be one of the greatest and most influential scientists of all time.",

"Isaac Newton was an English polymath active as a mathematician, physicist, astronomer, alchemist, theologian, and author who was described in his time as a natural philosopher.",

"Marie Curie was a Polish and naturalised-French physicist and chemist who conducted pioneering research on radioactivity",

]

Run embedding inference, notice how we specify precision="binary".

[5]:

binary_embeddings = model.encode(documents, precision="binary")

[8]:

print(

"Binary embedding shape {} with type {}".format(

binary_embeddings.shape, binary_embeddings.dtype

)

)

Binary embedding shape (4, 128) with type int8

Definining the Vespa application

First, we define a Vespa schema with the fields we want to store and their type.

Notice the binary_vector field that defines an indexed (dense) Vespa tensor with the dimension name x[128].

The indexing statement includes index which means that Vespa will use HNSW indexing for this field.

Also notice the configuration of distance-metric where we specify hamming.

[9]:

from vespa.package import Schema, Document, Field, FieldSet

my_schema = Schema(

name="doc",

mode="index",

document=Document(

fields=[

Field(

name="doc_id",

type="string",

indexing=["summary", "index"],

match=["word"],

rank="filter",

),

Field(

name="text",

type="string",

indexing=["summary", "index"],

index="enable-bm25",

),

Field(

name="binary_vector",

type="tensor<int8>(x[128])",

indexing=["attribute", "index"],

attribute=["distance-metric: hamming"],

),

]

),

fieldsets=[FieldSet(name="default", fields=["text"])],

)

We must add the schema to a Vespa application package. This consists of configuration files, schemas, models, and possibly even custom code (plugins).

[15]:

from vespa.package import ApplicationPackage

vespa_app_name = "mixedbreadai"

vespa_application_package = ApplicationPackage(name=vespa_app_name, schema=[my_schema])

In the last step, we configure ranking by adding rank-profile’s to the schema.

unpack_bits unpacks the binary representation into a 1024-dimensional float vector doc.

We define two tensor inputs, one compact binary representation that is used for the nearestNeighbor search and one full version that is used in ranking.

[16]:

from vespa.package import RankProfile, FirstPhaseRanking, SecondPhaseRanking, Function

rerank = RankProfile(

name="rerank",

inputs=[

("query(q_binary)", "tensor<int8>(x[128])"),

("query(q_full)", "tensor<float>(x[1024])"),

],

functions=[

Function( # this returns a tensor<float>(x[1024]) with values -1 or 1

name="unpack_binary_representation",

expression="2*unpack_bits(attribute(binary_vector)) -1",

)

],

first_phase=FirstPhaseRanking(

expression="closeness(field, binary_vector)" # 1/(1 + hamming_distance). Calculated between the binary query and the binary_vector

),

second_phase=SecondPhaseRanking(

expression="sum( query(q_full)* unpack_binary_representation )", # re-rank using the dot product between float query and the unpacked binary representation

rerank_count=100,

),

match_features=["distance(field, binary_vector)"],

)

my_schema.add_rank_profile(rerank)

Deploy the application to Vespa Cloud

With the configured application, we can deploy it to Vespa Cloud. It is also possible to deploy the app using docker; see the Hybrid Search - Quickstart guide for an example of deploying it to a local docker container.

Install the Vespa CLI using homebrew - or download a binary from GitHub as demonstrated below.

[ ]:

!brew install vespa-cli

Alternatively, if running in Colab, download the Vespa CLI:

[ ]:

import os

import requests

res = requests.get(

url="https://api.github.com/repos/vespa-engine/vespa/releases/latest"

).json()

os.environ["VERSION"] = res["tag_name"].replace("v", "")

!curl -fsSL https://github.com/vespa-engine/vespa/releases/download/v${VERSION}/vespa-cli_${VERSION}_linux_amd64.tar.gz | tar -zxf -

!ln -sf /content/vespa-cli_${VERSION}_linux_amd64/bin/vespa /bin/vespa

To deploy the application to Vespa Cloud we need to create a tenant in the Vespa Cloud:

Create a tenant at console.vespa-cloud.com (unless you already have one). This step requires a Google or GitHub account, and will start your free trial. Make note of the tenant name, it is used in the next steps.

Configure Vespa Cloud date-plane security

Create Vespa Cloud data-plane mTLS cert/key-pair. The mutual certificate pair is used to talk to your Vespa cloud endpoints. See Vespa Cloud Security Guide for details.

We save the paths to the credentials for later data-plane access without using pyvespa APIs.

[ ]:

import os

os.environ["TENANT_NAME"] = "vespa-team" # Replace with your tenant name

vespa_cli_command = (

f'vespa config set application {os.environ["TENANT_NAME"]}.{vespa_app_name}'

)

!vespa config set target cloud

!{vespa_cli_command}

!vespa auth cert -N

Validate that we have the expected data-plane credential files:

[19]:

from os.path import exists

from pathlib import Path

cert_path = (

Path.home()

/ ".vespa"

/ f"{os.environ['TENANT_NAME']}.{vespa_app_name}.default/data-plane-public-cert.pem"

)

key_path = (

Path.home()

/ ".vespa"

/ f"{os.environ['TENANT_NAME']}.{vespa_app_name}.default/data-plane-private-key.pem"

)

if not exists(cert_path) or not exists(key_path):

print(

"ERROR: set the correct paths to security credentials. Correct paths above and rerun until you do not see this error"

)

Note that the subsequent Vespa Cloud deploy call below will add data-plane-public-cert.pem to the application before deploying it to Vespa Cloud, so that you have access to both the private key and the public certificate. At the same time, Vespa Cloud only knows the public certificate.

Configure Vespa Cloud control-plane security

Authenticate to generate a tenant level control plane API key for deploying the applications to Vespa Cloud, and save the path to it.

The generated tenant api key must be added in the Vespa Console before attemting to deploy the application.

To use this key in Vespa Cloud click 'Add custom key' at

https://console.vespa-cloud.com/tenant/TENANT_NAME/account/keys

and paste the entire public key including the BEGIN and END lines.

[ ]:

!vespa auth api-key

from pathlib import Path

api_key_path = Path.home() / ".vespa" / f"{os.environ['TENANT_NAME']}.api-key.pem"

Deploy to Vespa Cloud

Now that we have data-plane and control-plane credentials ready, we can deploy our application to Vespa Cloud!

PyVespa supports deploying apps to the development zone.

Note: Deployments to dev and perf expire after 7 days of inactivity, i.e., 7 days after running deploy. This applies to all plans, not only the Free Trial. Use the Vespa Console to extend the expiry period, or redeploy the application to add 7 more days.

[22]:

from vespa.deployment import VespaCloud

def read_secret():

"""Read the API key from the environment variable. This is

only used for CI/CD purposes."""

t = os.getenv("VESPA_TEAM_API_KEY")

if t:

return t.replace(r"\n", "\n")

else:

return t

vespa_cloud = VespaCloud(

tenant=os.environ["TENANT_NAME"],

application=vespa_app_name,

key_content=read_secret() if read_secret() else None,

key_location=api_key_path,

application_package=vespa_application_package,

)

Now deploy the app to Vespa Cloud dev zone.

The first deployment typically takes 2 minutes until the endpoint is up.

[23]:

from vespa.application import Vespa

app: Vespa = vespa_cloud.deploy()

Deployment started in run 1 of dev-aws-us-east-1c for samples.mixedbreadai. This may take a few minutes the first time.

INFO [22:14:39] Deploying platform version 8.322.22 and application dev build 1 for dev-aws-us-east-1c of default ...

INFO [22:14:39] Using CA signed certificate version 0

INFO [22:14:46] Using 1 nodes in container cluster 'mixedbreadai_container'

INFO [22:15:18] Session 2205 for tenant 'samples' prepared and activated.

INFO [22:15:21] ######## Details for all nodes ########

INFO [22:15:35] h90193a.dev.aws-us-east-1c.vespa-external.aws.oath.cloud: expected to be UP

INFO [22:15:35] --- platform vespa/cloud-tenant-rhel8:8.322.22 <-- :

INFO [22:15:35] --- logserver-container on port 4080 has not started

INFO [22:15:35] --- metricsproxy-container on port 19092 has not started

INFO [22:15:35] h90971b.dev.aws-us-east-1c.vespa-external.aws.oath.cloud: expected to be UP

INFO [22:15:35] --- platform vespa/cloud-tenant-rhel8:8.322.22 <-- :

INFO [22:15:35] --- container-clustercontroller on port 19050 has not started

INFO [22:15:35] --- metricsproxy-container on port 19092 has not started

INFO [22:15:35] h91168a.dev.aws-us-east-1c.vespa-external.aws.oath.cloud: expected to be UP

INFO [22:15:35] --- platform vespa/cloud-tenant-rhel8:8.322.22 <-- :

INFO [22:15:35] --- storagenode on port 19102 has not started

INFO [22:15:35] --- searchnode on port 19107 has not started

INFO [22:15:35] --- distributor on port 19111 has not started

INFO [22:15:35] --- metricsproxy-container on port 19092 has not started

INFO [22:15:35] h91567a.dev.aws-us-east-1c.vespa-external.aws.oath.cloud: expected to be UP

INFO [22:15:35] --- platform vespa/cloud-tenant-rhel8:8.322.22 <-- :

INFO [22:15:35] --- container on port 4080 has not started

INFO [22:15:35] --- metricsproxy-container on port 19092 has not started

INFO [22:16:41] Waiting for convergence of 10 services across 4 nodes

INFO [22:16:41] 1/1 nodes upgrading platform

INFO [22:16:41] 2 application services still deploying

DEBUG [22:16:41] h91567a.dev.aws-us-east-1c.vespa-external.aws.oath.cloud: expected to be UP

DEBUG [22:16:41] --- platform vespa/cloud-tenant-rhel8:8.322.22 <-- :

DEBUG [22:16:41] --- container on port 4080 has not started

DEBUG [22:16:41] --- metricsproxy-container on port 19092 has not started

INFO [22:17:11] Found endpoints:

INFO [22:17:11] - dev.aws-us-east-1c

INFO [22:17:11] |-- https://cf949f23.b8a7f611.z.vespa-app.cloud/ (cluster 'mixedbreadai_container')

INFO [22:17:12] Installation succeeded!

Using mTLS (key,cert) Authentication against endpoint https://cf949f23.b8a7f611.z.vespa-app.cloud//ApplicationStatus

Application is up!

Finished deployment.

Feed our sample documents and their binary embedding representation

With few documents, we use the synchronous API. Read more in reads and writes.

[24]:

from vespa.io import VespaResponse

for i, doc in enumerate(documents):

response: VespaResponse = app.feed_data_point(

schema="doc",

data_id=str(i),

fields={

"doc_id": str(i),

"text": doc,

"binary_vector": binary_embeddings[i].tolist(),

},

)

assert response.is_successful()

Querying data

Read more about querying Vespa in:

In this case, we use quantization.quantize_embeddings after first obtaining the float version, this to avoid running the model inference twice.

[54]:

query = "Who was Isac Newton?"

# This returns the float version

query_embedding_float = model.encode([query])

[ ]:

from sentence_transformers.quantization import quantize_embeddings

query_embedding_binary = quantize_embeddings(query_embedding_float, precision="binary")

Now, we use nearestNeighbor search to retrieve 100 hits (targetHits) using the configured distance-metric (hamming distance). The retrieved hits are exposed to the ‹espa ranking framework, where we re-rank using the dot product between the float tensor and the unpacked binary vector.

[55]:

response = app.query(

yql="select * from doc where {targetHits:100}nearestNeighbor(binary_vector,q_binary)",

ranking="rerank",

body={

"input.query(q_binary)": query_embedding_binary[0].tolist(),

"input.query(q_full)": query_embedding_float[0].tolist(),

},

)

assert response.is_successful()

[56]:

import json

print(json.dumps(response.hits, indent=2))

[

{

"id": "id:doc:doc::2",

"relevance": 177.8957977294922,

"source": "mixedbreadai_content",

"fields": {

"matchfeatures": {

"closeness(field,binary_vector)": 0.003484320557491289,

"distance(field,binary_vector)": 286.0

},

"sddocname": "doc",

"documentid": "id:doc:doc::2",

"doc_id": "2",

"text": "Isaac Newton was an English polymath active as a mathematician, physicist, astronomer, alchemist, theologian, and author who was described in his time as a natural philosopher."

}

},

{

"id": "id:doc:doc::1",

"relevance": 144.52731323242188,

"source": "mixedbreadai_content",

"fields": {

"matchfeatures": {

"closeness(field,binary_vector)": 0.002890173410404624,

"distance(field,binary_vector)": 345.0

},

"sddocname": "doc",

"documentid": "id:doc:doc::1",

"doc_id": "1",

"text": "Albert Einstein was a German-born theoretical physicist who is widely held to be one of the greatest and most influential scientists of all time."

}

},

{

"id": "id:doc:doc::0",

"relevance": 138.78799438476562,

"source": "mixedbreadai_content",

"fields": {

"matchfeatures": {

"closeness(field,binary_vector)": 0.00273224043715847,

"distance(field,binary_vector)": 365.0

},

"sddocname": "doc",

"documentid": "id:doc:doc::0",

"doc_id": "0",

"text": "Alan Turing was an English mathematician, computer scientist, logician, cryptanalyst, philosopher and theoretical biologist."

}

},

{

"id": "id:doc:doc::3",

"relevance": 115.2405776977539,

"source": "mixedbreadai_content",

"fields": {

"matchfeatures": {

"closeness(field,binary_vector)": 0.002652519893899204,

"distance(field,binary_vector)": 376.0

},

"sddocname": "doc",

"documentid": "id:doc:doc::3",

"doc_id": "3",

"text": "Marie Curie was a Polish and naturalised-French physicist and chemist who conducted pioneering research on radioactivity"

}

}

]

Summary

Binary embeddings is an exciting development, as it reduces storage (32) and speed up vector searches as the hamming distance is much more efficient than distance metrics like angular or euclidean.

Clean up

We can now delete the cloud instance:

[ ]:

vespa_cloud.delete()